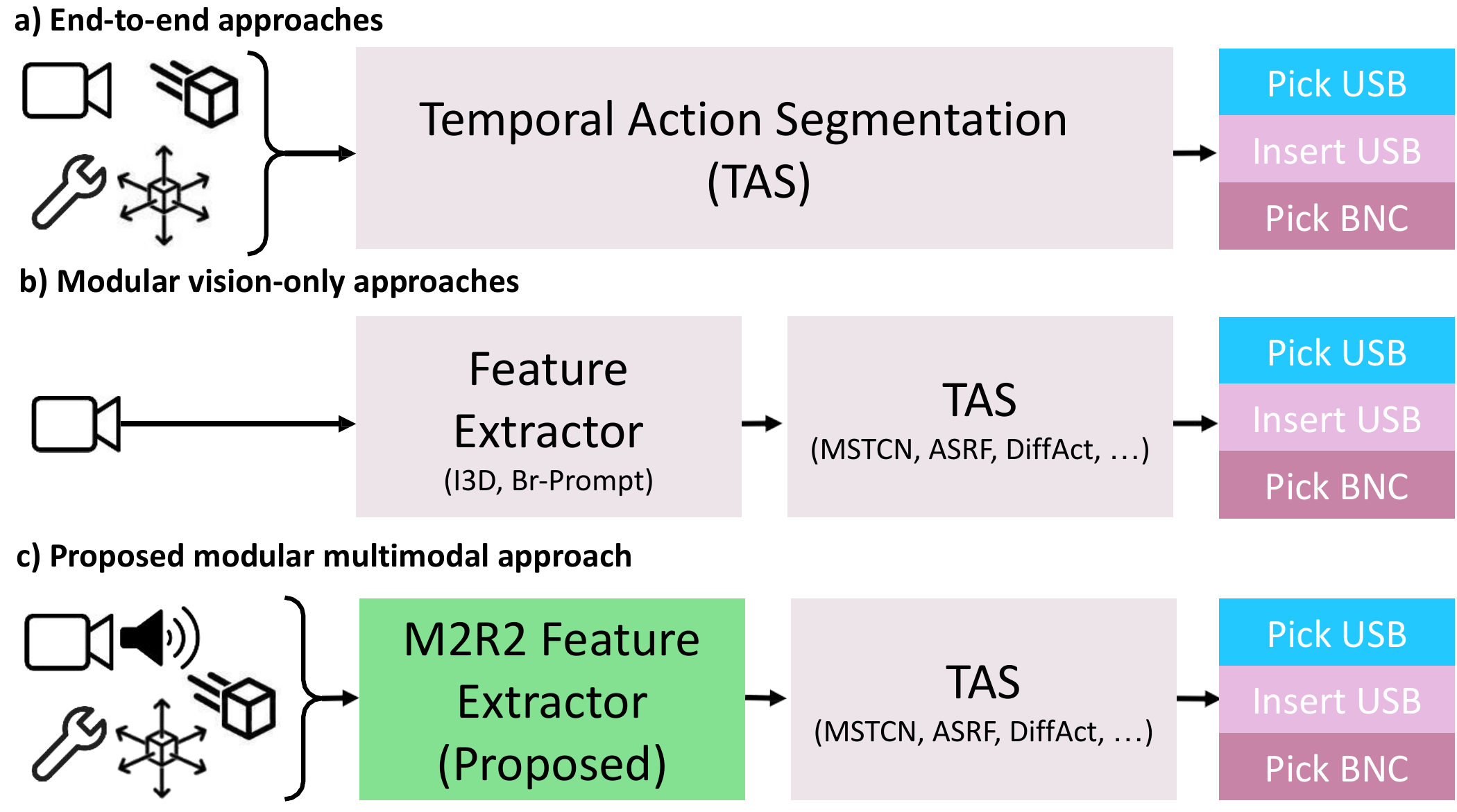

- Jun 2026: M2R2 has been accepted to ICRA 2026!

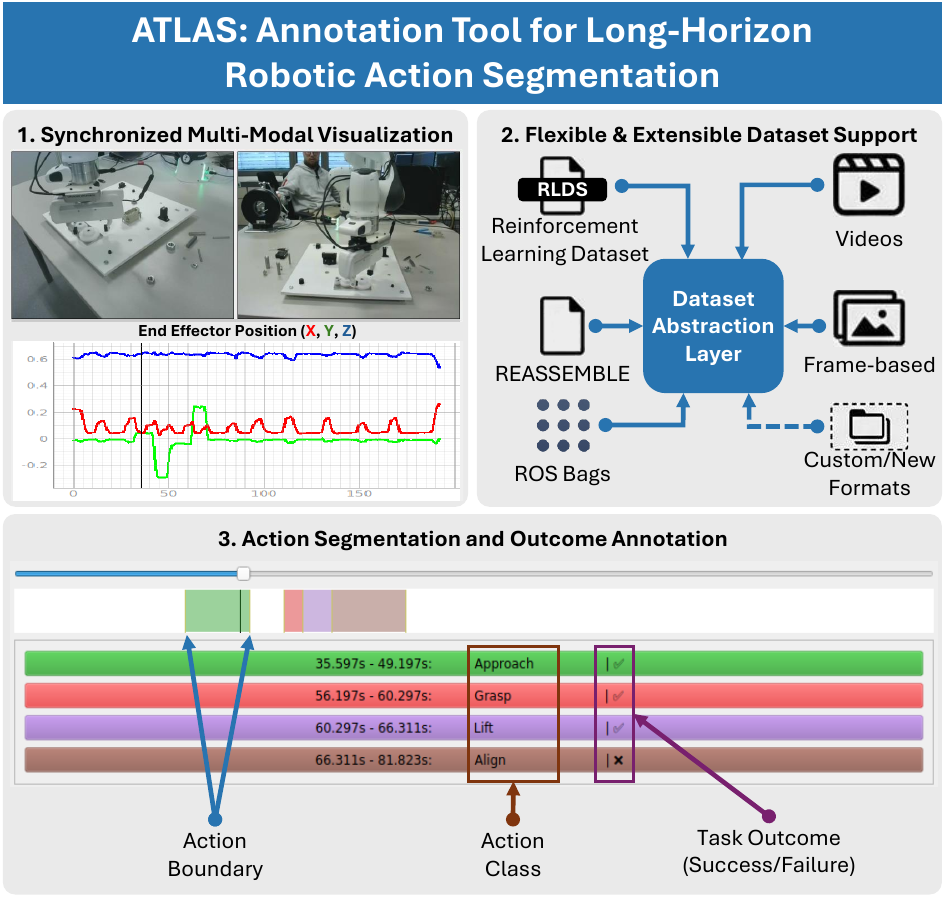

- Jun 2026: ATLAS has been accepted to ARSO 2026!

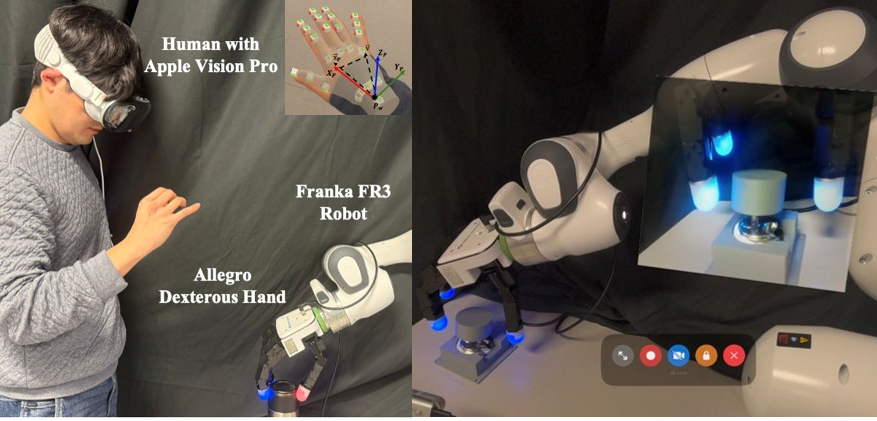

- Jun 2026: DexTwist has been accepted to ARSO 2026!

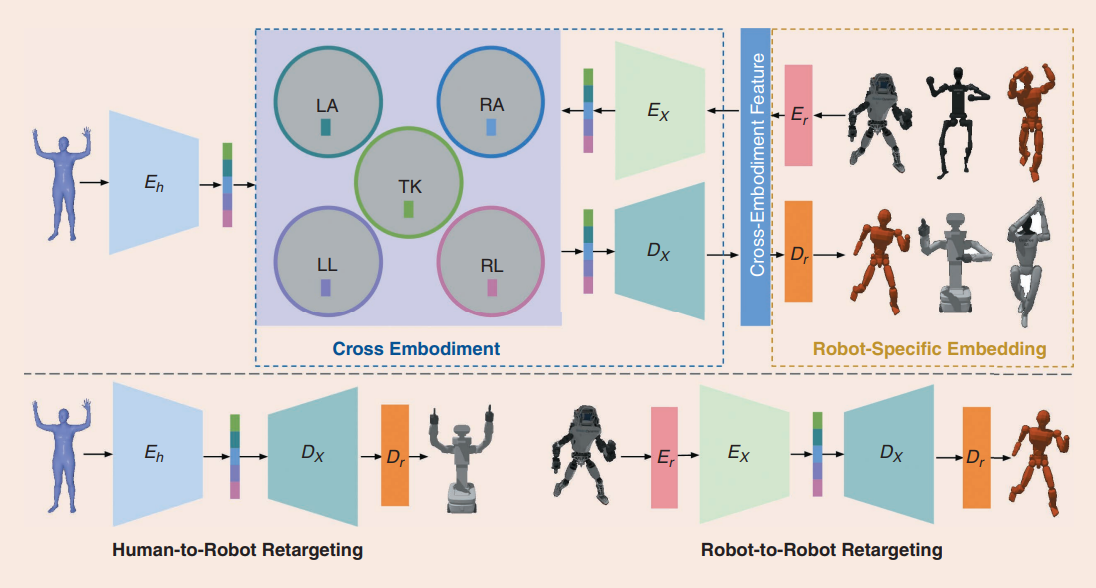

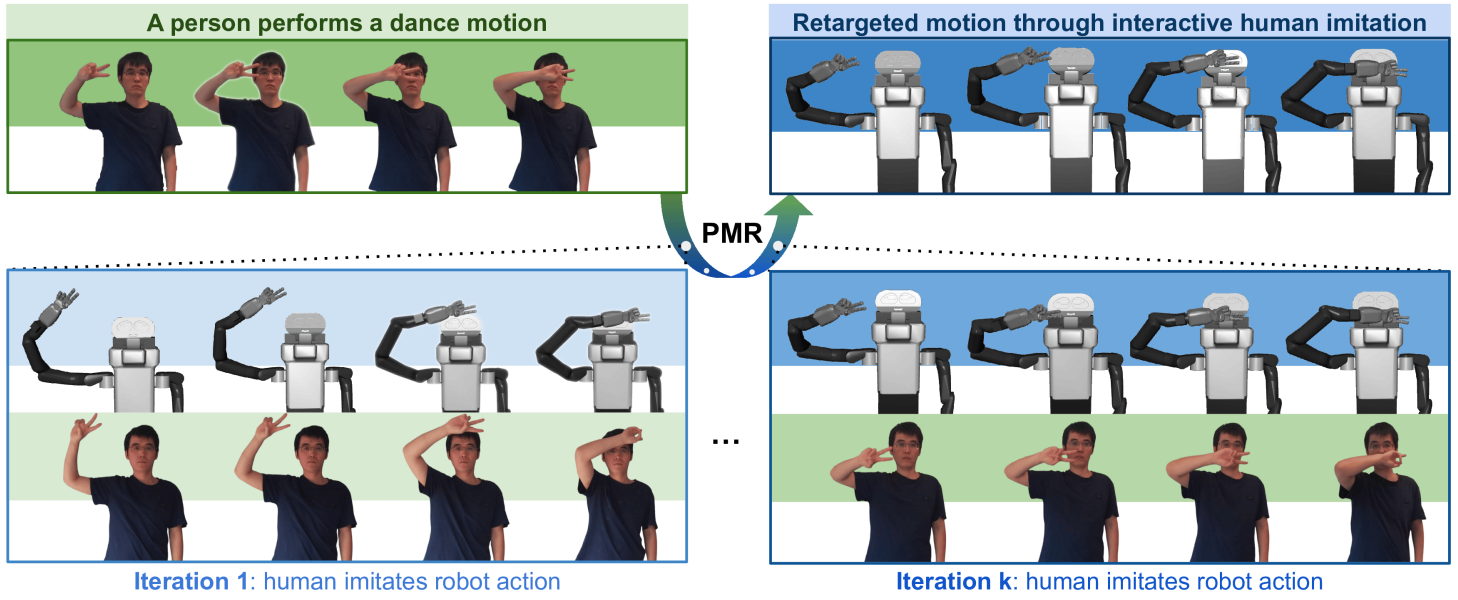

- Feb 2026: Cross-Embodiment Imitation has been accepted to IEEE Robotics & Automation Magazine!

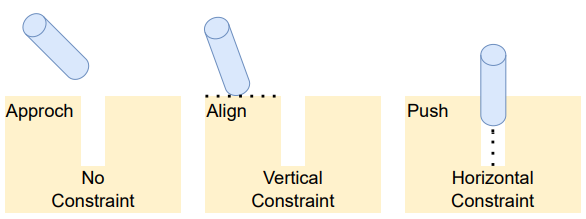

- Nov 2025: Our “Constraint-Informed Temporal Action Segmentation” paper was presented at ICCAS 2025.

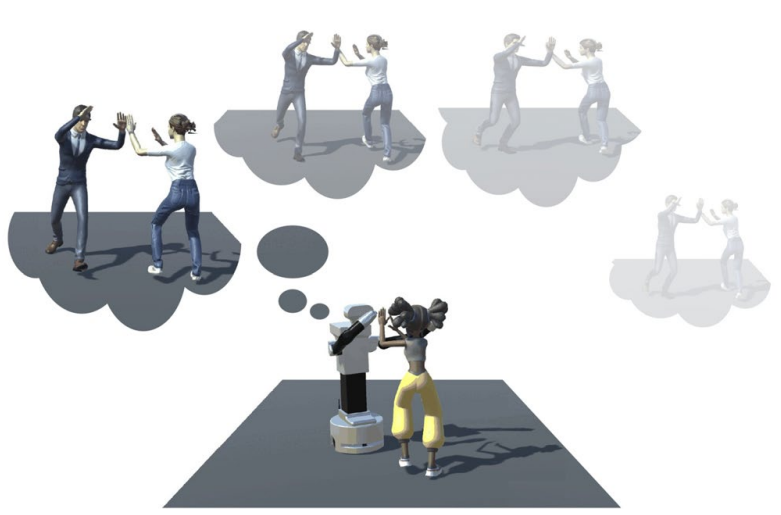

- Nov 2025: Our “Robot Behavior Generation for Social Human-Robot Interaction” paper was published in International Journal of Social Robotics!

- Oct 2025: Prof. Dongheui Lee gave a keynote talk at IROS 2025.

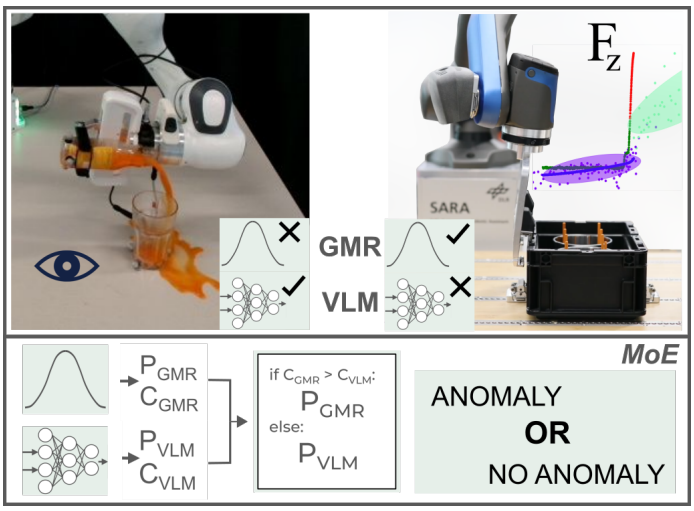

- Oct 2025: Our “Multimodal Anomaly Detection with a Mixture-of-Experts” paper was accepted at IROS 2025.

- Sep 2025: Our “Personalized Motion Retargeting through Bidirectional Human-Robot Imitation” paper was accepted at ICDL 2025.

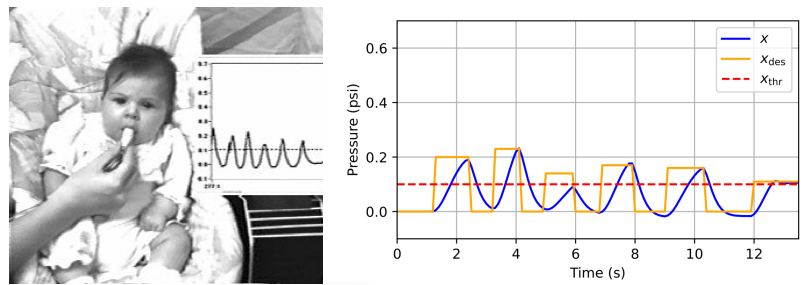

- Sep 2025: Our “Computational models of the emergence of self-exploration in 2-month-old infants” paper was accepted at ICDL 2025.

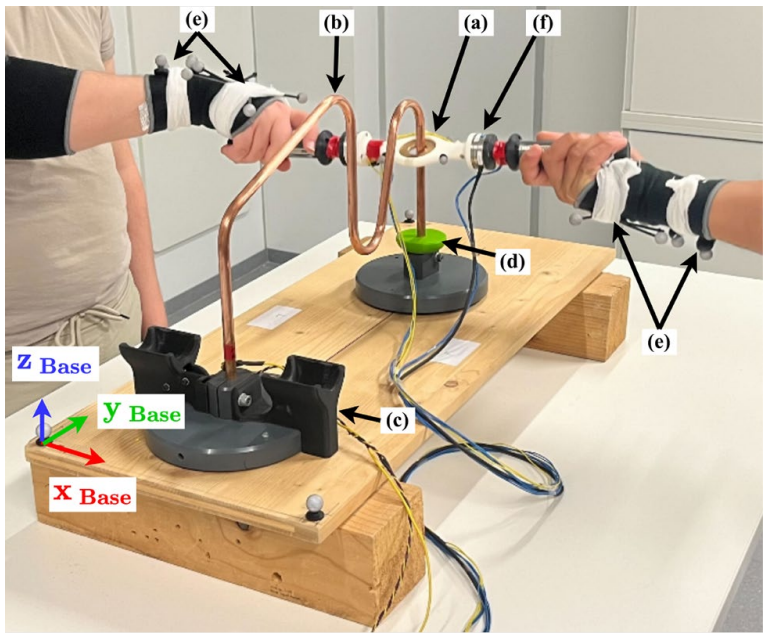

- Jul 2025: Our “Partner familiarity enhances performance in a manual precision task” paper was published in Scientific Reports!

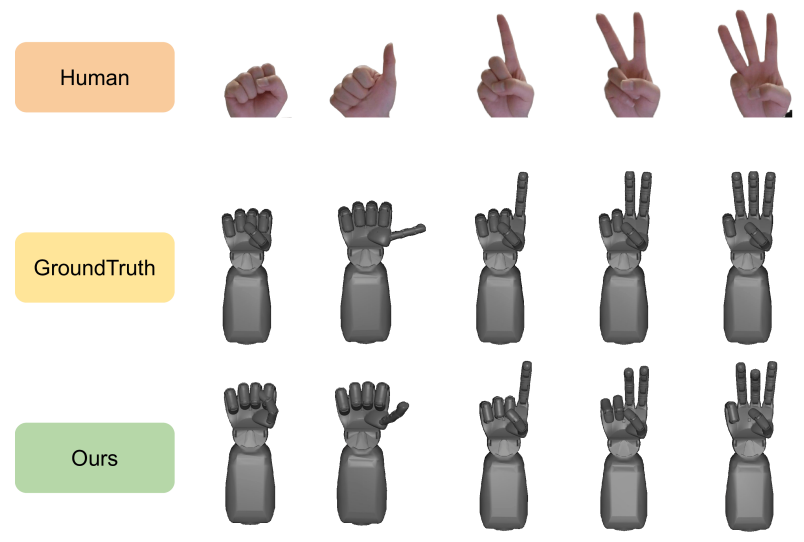

- Jun 2025: Our “Learning dexterous robot hand control by imitating human hands” paper was accepted at UR 2025.

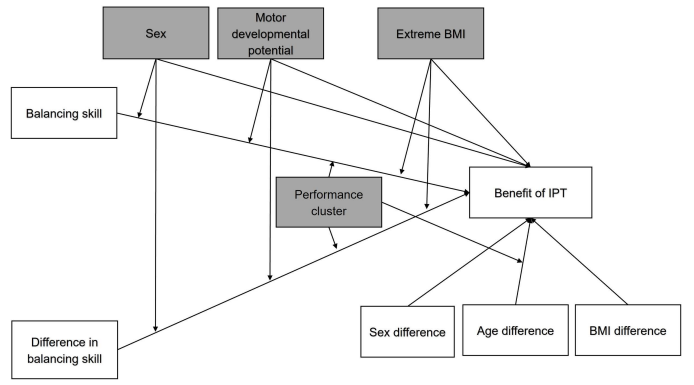

- Jun 2025: Our “The balance stabilising benefit of social touch” paper was published in PLOS ONE!

- Mar 2025: Our paper on prioritized output tracking control was published in IEEE Transactions on Automatic Control!

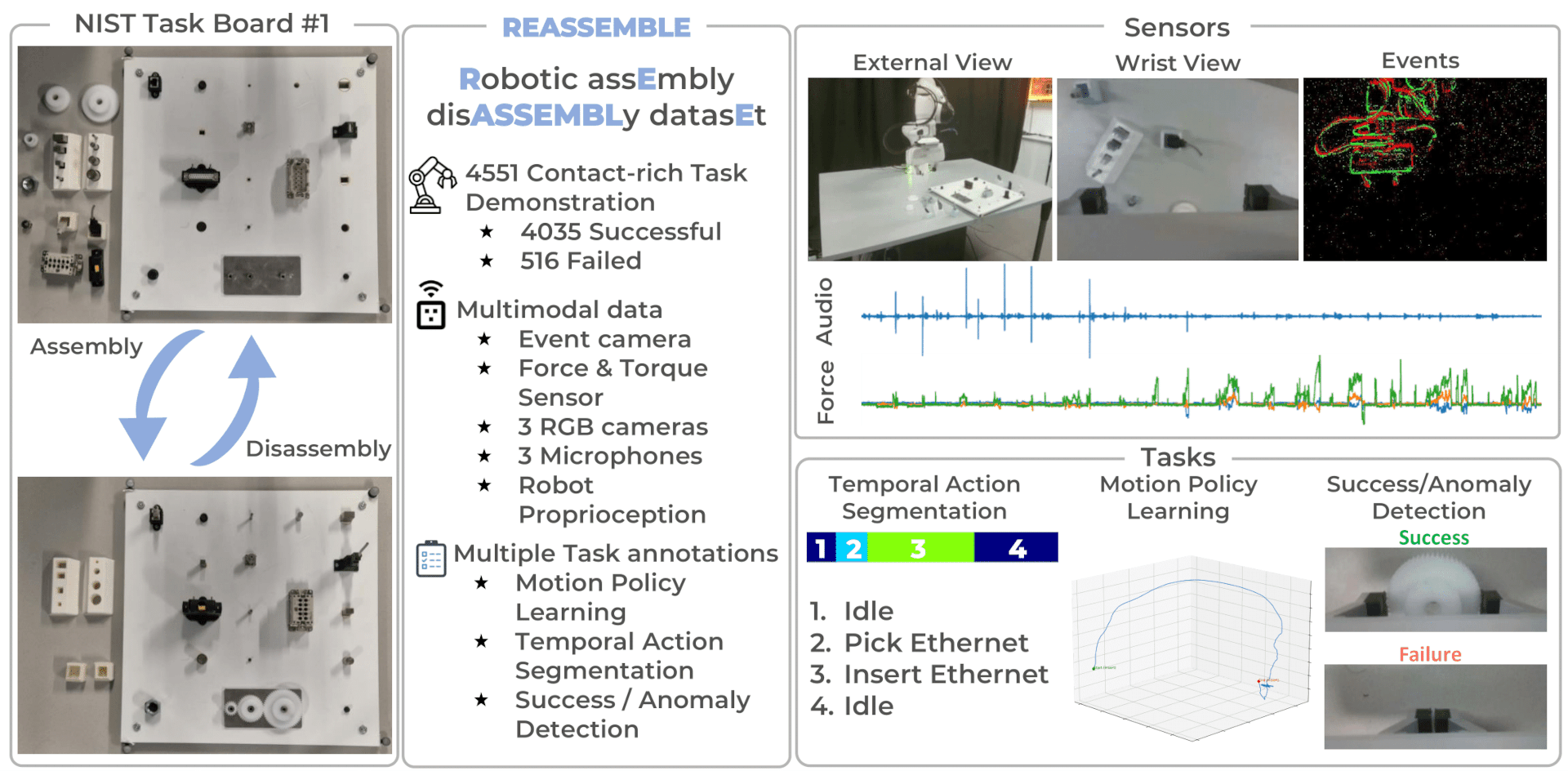

- Feb 2025: We are releasing the REASSEMBLE dataset.

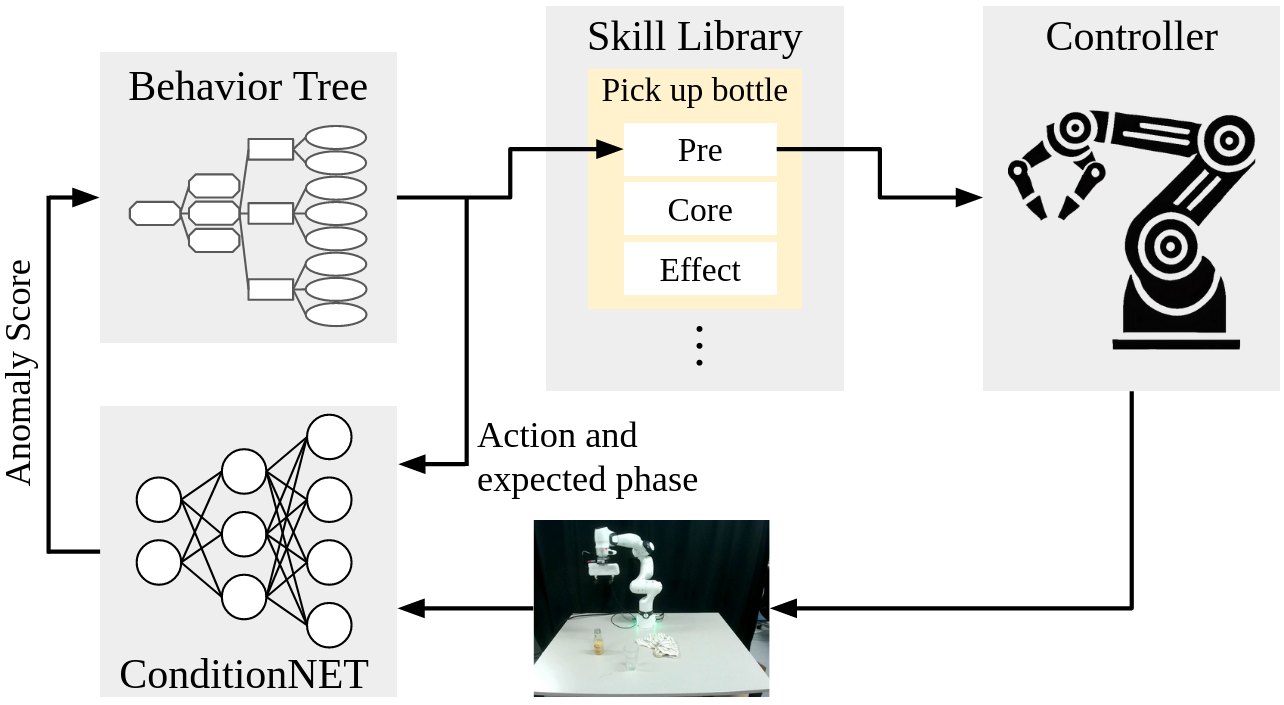

- Feb 2025: Our ConditionNET paper was accepted to Robotics and Automation Letters!

- Jan 2025: I-CTRL has been accepted to RAM Journal!

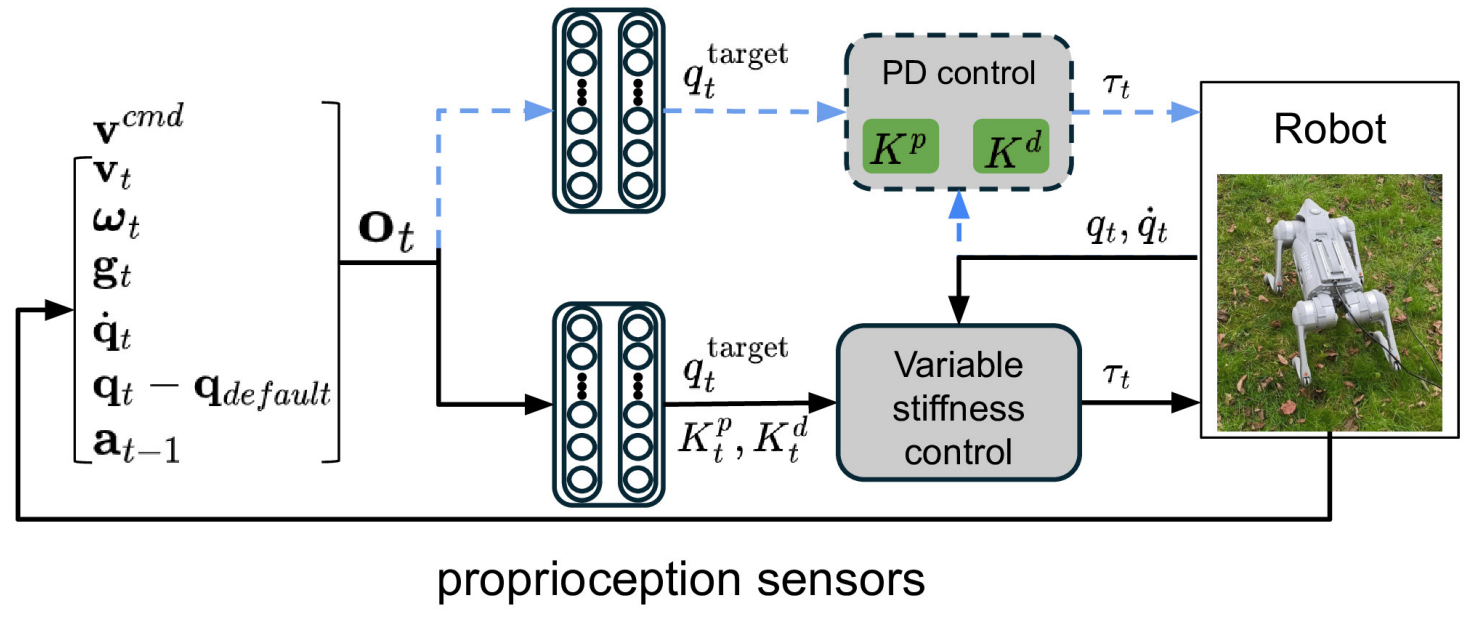

- Jan 2025: Our “Variable Stiffness for Robust Locomotion through Reinforcement Learning” paper was published in IFAC!

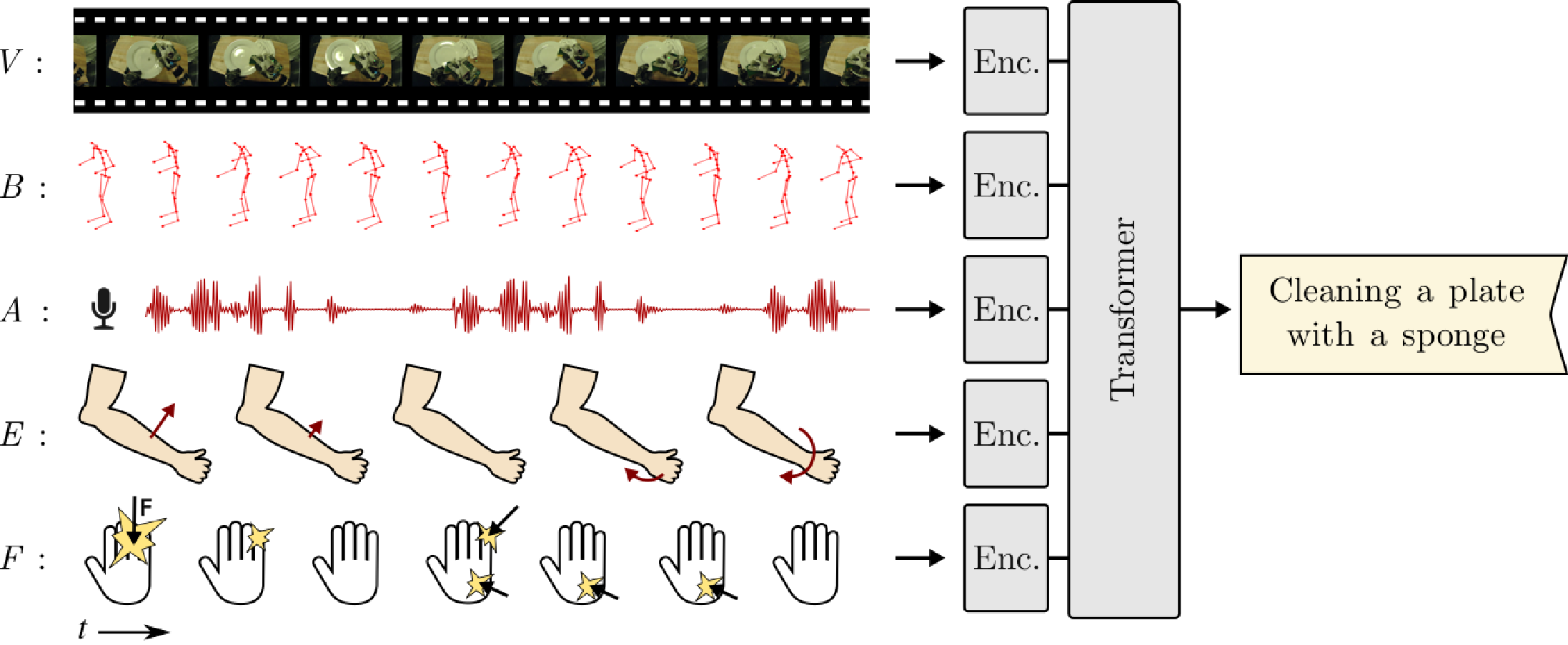

- Dec 2024: Our “Multimodal Transformer Models for Human Action Classification” paper won the Best Intelligence Paper Award at RiTA.

- Sep 2024: Our “Multimodal Transformer Models for Human Action Classification” paper was accepted at RiTA.

- Sep 2024: Self-AWare has been accepted to Humanoids 2024 and HFR 2024.

- Jun 2024: I-CTRL is out! Take a look at how it controls any bipedal humanoid robot by imitating any human motion.

- Dec 2023: SALADS has been accepted to ICRA 2024.

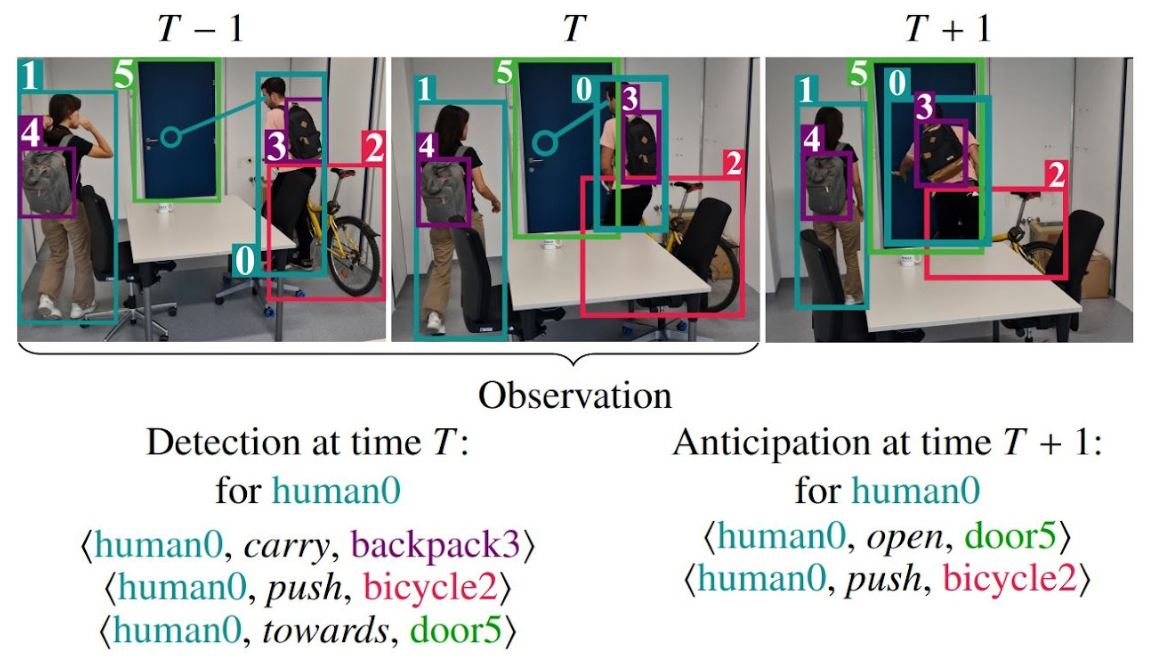

- Dec 2023: ECHO has been accepted to ICRA 2024.

- Dec 2023: UNIMASK-M has been accepted to AAAI 2024.

- Sep 2023: ImitationNet has been accepted to Humanoids 2023.

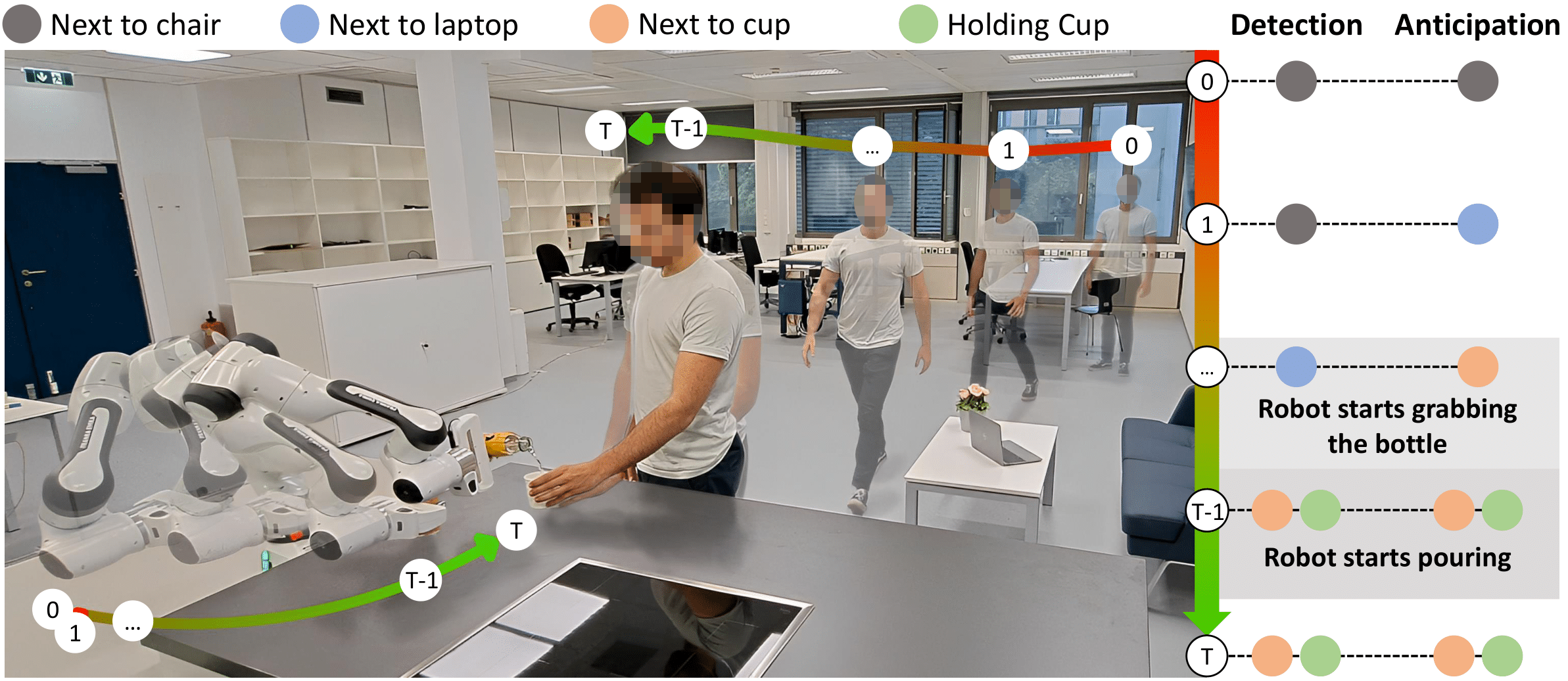

- Sep 2023: HOI4ABOT has been accepted to CoRL 2023.

- Jun 2023: HOI-Gaze has been accepted to CVIU Journal 2023.

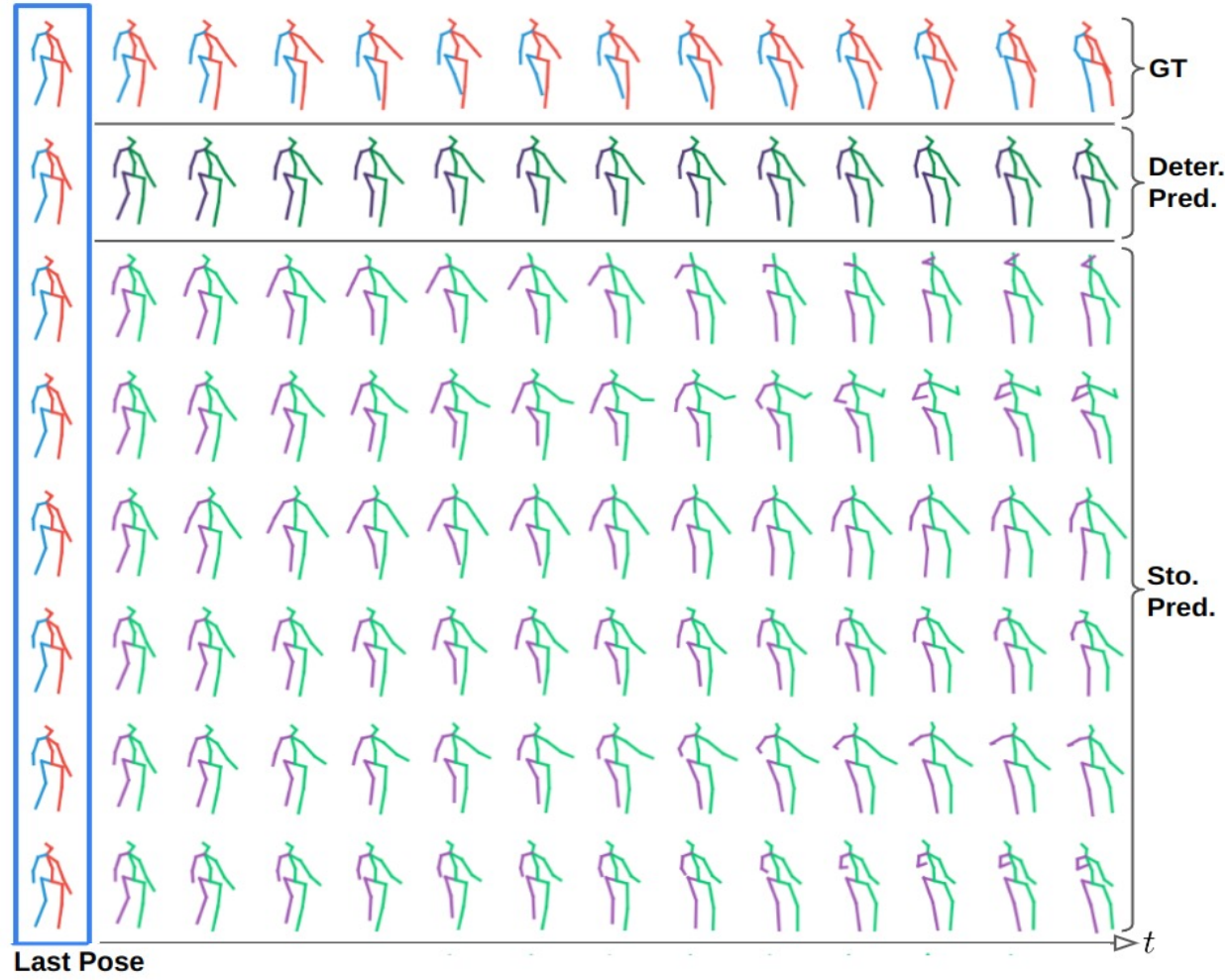

- Jan 2023: DiffusionMotion has been accepted to ICRA 2023.

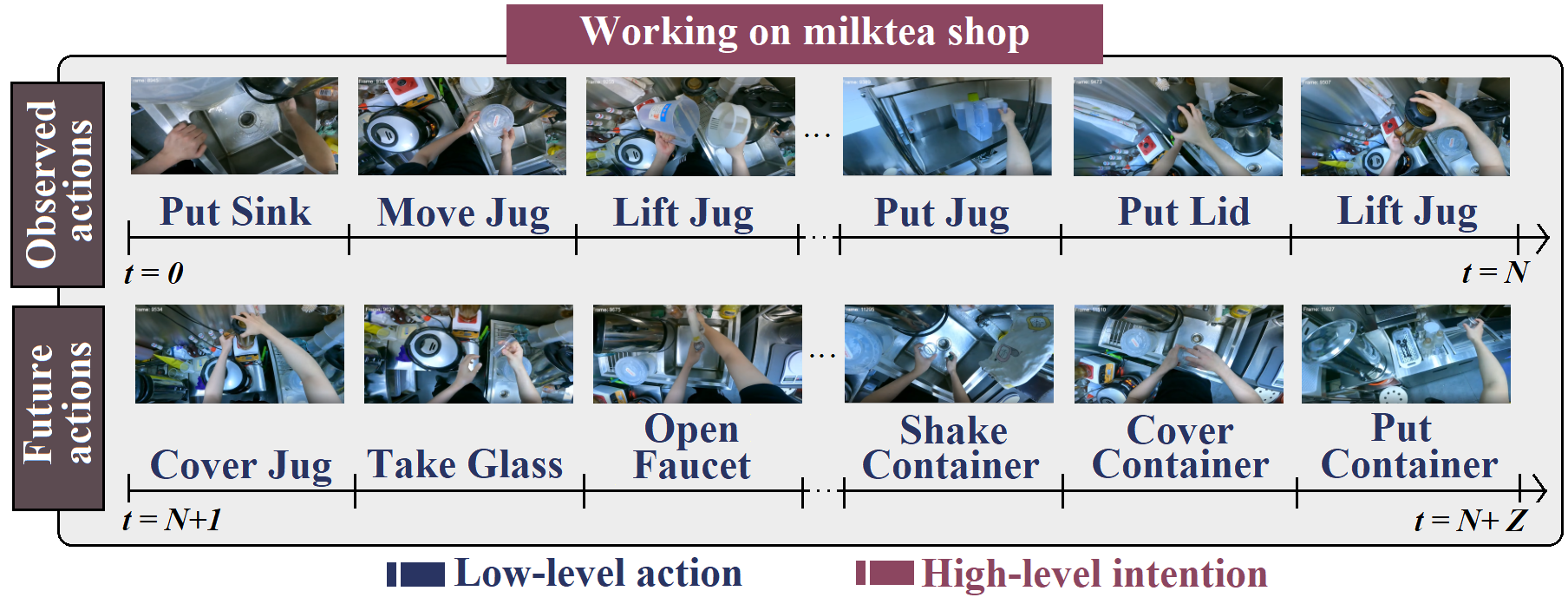

- Nov 2022: I-CVAE has been accepted to WACV 2023.

- Oct 2022: We won the First Place Award in the ECCV 2022 Ego4D Long-Term Action Anticipation Challenge with I-CVAE.

- Jun 2022: We won the First Place Award in the CVPR 2022 Ego4D Long-Term Action Anticipation Challenge with I-CVAE.

- Apr 2022: 2CHTR has been accepted to IROS 2022.

Autonomous Systems Lab

At the Autonomous Systems Lab, we conduct innovative research on cognitive robot motor skill learning and control based on human motion understanding. Our research has two main focuses: autonomous learning from observations in daily life, and cognitive robot control. To realize intuitive robots that meet human expectations for a robotic companion, we study human beings and transfer the mechanisms we discover to robotic systems. In this way, a robot can learn new skills without being explicitly programmed by engineers and can learn complicated tasks incrementally within a generalized framework. In particular, by bridging learning from observations, robot motor control, and learning from self-practice, robots will be able to perform complex tasks robustly under uncertainty.